Semi automation and software testing

The middle way

6 Oct 2018

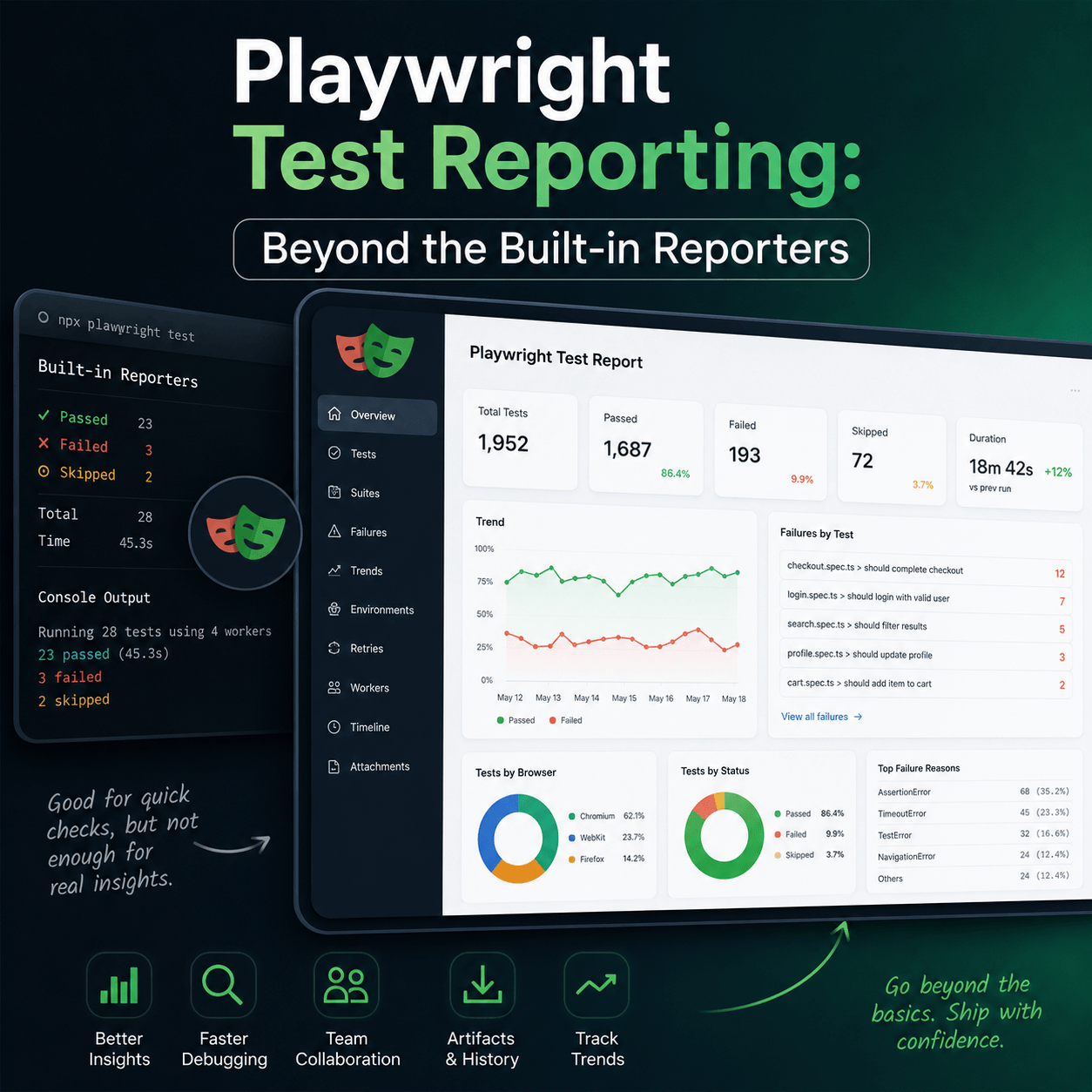

When some test cases cannot be fully automated, semi automation can often help development teams save time on testing.

What is semi automation?

Semi automation involves automating only part of a test case and completing the rest with a manual process. Automation may be applied at the beginning of the test, at the end or somewhere in between. Often, automation is used early in a test process to generate some sort of data output that a human tester then examines before assigning a pass or fail outcome.

UI verification example

As an example, suppose a team has an application with a web front-end that must be responsive across different screen and browser widths. The Bootstrap framework, for instance, provides a popular grid system that adjusts content layout across up to five width intervals. The development team may want to test that the content on every page displays correctly for all five supported width ranges in every browser the product supports. At the same time, the organization may have a visual design guideline that the application must adhere to, and this also needs to be checked.
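For reference, Bootstrap 4 (the current major version when this post was written) defines five grid tiers: extra small (below 576px), sm (576px and up), md (768px and up), lg (992px and up) and xl (1200px and up). The minimal Python sketch below maps a viewport width to its tier and picks one width per tier to test. The representative widths are illustrative assumptions, not values Bootstrap prescribes, and because the breakpoints apply to the viewport rather than the outer browser window, a window resized to a given width may end up a few pixels narrower.

```python
from bisect import bisect_right

# Bootstrap 4 grid tiers and their minimum viewport widths in pixels.
# "xs" has no lower bound, so it starts at 0.
TIERS = ["xs", "sm", "md", "lg", "xl"]
MIN_WIDTHS = [0, 576, 768, 992, 1200]

# One representative viewport width per tier (illustrative choices only).
REPRESENTATIVE_WIDTHS = {"xs": 375, "sm": 640, "md": 800, "lg": 1024, "xl": 1366}


def tier_for_width(width: int) -> str:
    """Return the Bootstrap 4 tier that applies at a given viewport width."""
    if width < 0:
        raise ValueError("width must be non-negative")
    # bisect_right finds how many tier minimums are <= width; the last of
    # those is the active tier.
    return TIERS[bisect_right(MIN_WIDTHS, width) - 1]


if __name__ == "__main__":
    for tier, width in REPRESENTATIVE_WIDTHS.items():
        assert tier_for_width(width) == tier
        print(f"{tier}: {width}px")
```

Testing one width inside each interval, rather than many arbitrary widths, keeps the number of captures at exactly five per page per browser.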

To perform this set of tests, a manual tester would have to set the browser to a specific width, open every page in the application (including modal views) and check that everything appears correct. They would then need to change the width and repeat the whole pass, five times in total. That is not all. Suppose the application supports Chrome, Firefox, Safari, Edge and Opera. The tester must now repeat all of the above in each browser, so the number of views to check is 5 browsers x 5 widths x the number of pages. Even for a small application with only 10 pages, that is 250 different views that must be navigated to and examined on every release, and the navigation alone would take a significant amount of time.

Full automation is difficult to achieve here because assigning a pass or fail result to a visual layout is subjective. However, assuming a test harness already drives the browsers with Selenium, everything except the verification itself can be automated. The automation changes the width, navigates to every page in every browser and takes a screen capture of each window; these images are then submitted to a human test team for manual verification. Flicking through a set of images in succession is much faster than performing hundreds or thousands of actions to reach each view before verifying it. This sort of semi automation is a great first step towards saving test time and allowing faster releases on every iteration.

The semi automation can later be extended to automate more as required. To add further automation to the image example, image comparison software can be used to check whether a new screen capture matches one that a human has already verified in the past. If it does, the earlier pass or fail outcome can be assigned automatically without submitting the image to a human tester.
This procedure can provide a large return on investment for regression testing, since only new or changed views need human review on each release.
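To make the capture-and-triage idea concrete, here is a minimal Python sketch, not a definitive implementation. It enumerates every browser, width and page combination, captures each view through a function passed in, and reuses an earlier human verdict when a capture matches one already reviewed; anything unmatched is marked "unknown" for a person to check. Assumptions: the browser names, widths and ten page paths are hypothetical placeholders; the capture function stands in for a Selenium-driven one (which might call WebDriver's `set_window_size`, `get` and `get_screenshot_as_png`); and matching uses an exact SHA-256 hash of the image bytes, a much stricter stand-in for real image comparison software, which typically tolerates small pixel differences.

```python
import hashlib
from itertools import product
from typing import Callable, Dict, List, NamedTuple

# Hypothetical test matrix: 5 browsers x 5 widths x 10 pages.
BROWSERS = ["chrome", "firefox", "safari", "edge", "opera"]
WIDTHS = [375, 640, 800, 1024, 1366]
PAGES = [f"/page-{n}" for n in range(1, 11)]


class View(NamedTuple):
    browser: str
    width: int
    page: str


class Outcome(NamedTuple):
    view: View
    digest: str  # SHA-256 of the captured image bytes
    result: str  # "pass", "fail" or "unknown" (needs a human)


def enumerate_views() -> List[View]:
    """Every browser/width/page combination that must be checked."""
    return [View(b, w, p) for b, w, p in product(BROWSERS, WIDTHS, PAGES)]


def triage(views: List[View], capture: Callable[[View], bytes],
           verdicts: Dict[str, str]) -> List[Outcome]:
    """Capture each view and reuse a prior human verdict when the image is
    byte-for-byte identical to one already reviewed; otherwise mark it
    'unknown' so a human tester reviews it."""
    outcomes = []
    for view in views:
        digest = hashlib.sha256(capture(view)).hexdigest()
        outcomes.append(Outcome(view, digest, verdicts.get(digest, "unknown")))
    return outcomes


if __name__ == "__main__":
    views = enumerate_views()
    print(len(views))  # 250

    # Stand-in for a Selenium-driven capture (hypothetical): fake image bytes.
    def fake_capture(view: View) -> bytes:
        return f"{view.browser}|{view.width}|{view.page}".encode()

    # Suppose a human previously passed one view.
    seen = hashlib.sha256(fake_capture(View("chrome", 375, "/page-1"))).hexdigest()
    results = triage(views, fake_capture, {seen: "pass"})
    print(sum(o.result == "unknown" for o in results))  # 249
```

In practice the verdict store would be updated each time a human assigns pass or fail, so later runs send only new or changed views for review.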

How Tesults facilitates semi automation

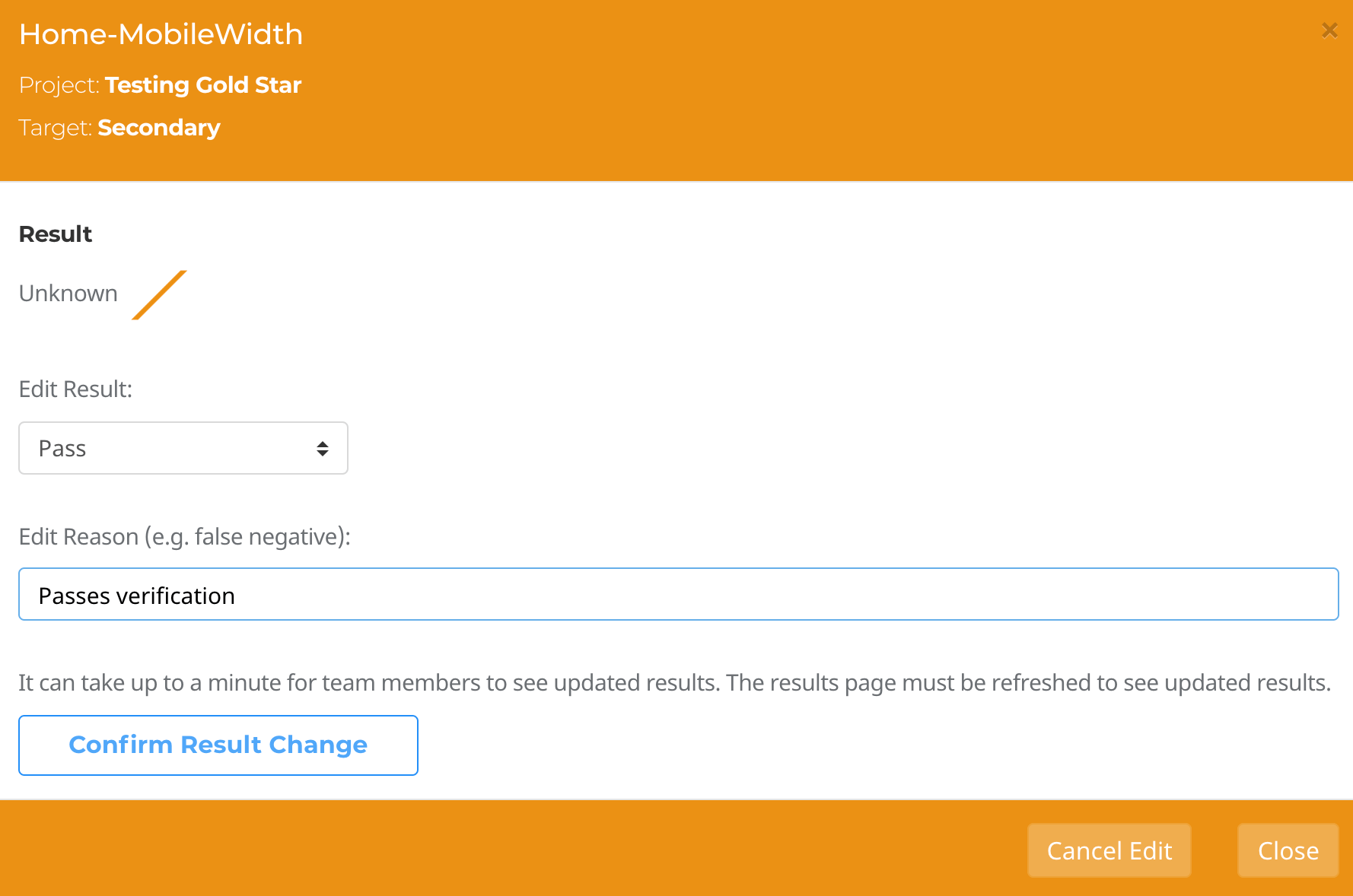

When writing semi automated tests that require human verification to confirm a pass or fail result, such as in the UI verification example, set each such test result to the ‘unknown’ outcome rather than ‘pass’ or ‘fail’ when the automated results are uploaded. The results then appear on Tesults as unknown, and human testers can examine each test case. If screen captures need examining, this is a fast process because Tesults displays images inline for each test case, and the supplemental results view shows all images for a test run in a carousel that can be examined quickly. After checking the images, the human tester can edit each test case to assign a pass or fail result.

Once this process is finished, the human tester can optionally trigger a notification by email or to a Slack channel to make the rest of the team aware of the completed test run.
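The upload step described in this section, where semi automated results are sent with an ‘unknown’ outcome, might be sketched in Python as follows. It builds case records in the shape the Tesults Python library accepts as we understand its documentation: a `target` token plus `results.cases`, where each case has `name`, `suite`, `result` and `files`. Treat those field names, and the commented-out `tesults.results` upload call, as assumptions to verify against the Tesults docs; the test names, paths and token are placeholders.

```python
from typing import Dict, List


def build_cases(screenshots: Dict[str, str],
                suite: str = "UI verification") -> List[dict]:
    """Turn {test name: screenshot path} into case records whose result is
    'unknown', leaving the pass/fail decision to a human reviewer."""
    return [
        {
            "name": name,
            "suite": suite,
            "result": "unknown",  # not "pass"/"fail": a human decides later
            "files": [path],      # screenshot to display inline
        }
        for name, path in sorted(screenshots.items())
    ]


def build_payload(target_token: str, cases: List[dict]) -> dict:
    """Wrap cases in a results payload (shape assumed from the Tesults docs)."""
    return {"target": target_token, "results": {"cases": cases}}


if __name__ == "__main__":
    cases = build_cases({
        "chrome-375-home": "/tmp/captures/chrome-375-home.png",
        "firefox-1024-home": "/tmp/captures/firefox-1024-home.png",
    })
    payload = build_payload("YOUR_TARGET_TOKEN", cases)
    print(payload["results"]["cases"][0]["result"])  # unknown
    # Uploading would then look something like this (requires the tesults
    # package; verify against its documentation):
    #   import tesults
    #   ret = tesults.results(payload)
```

Sorting by test name keeps the upload order stable between runs, which makes the carousel of captures easier to compare from one release to the next.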

A middle way

Semi automation offers a sensible and pragmatic middle way between manual and automated testing in cases where full automation is not possible or the potential return on investment for a highly complex automation system is poor. Rather than the binary options of automating or not automating, semi automation offers degrees of automation that can have a significant impact on test efficiency.

By Ajeet Dhaliwal